There are lots of optimization opportunities in a statically typed, purely functional language. This post describes an approach that improves the performance of off-the-shelf Haskell list functions by about 25% on average. The code is available as a drop-in replacement for Data.List.

This is part of an ongoing series on high-performance Haskell programming. Related posts include stream fusion for lists, programming the inliner, Judy arrays and Haskell, making binary parsing faster and applying stream fusion to arrays.

Background

Statically typed, purely functional code is a sweet spot for optimization hackers. All that information available at compile time lets us write some pretty radical transformations, and know they are safe. Stream fusion, for example, relies on having type and effect information available statically, and uses that to make complexity-changing rewrites of your code. Clever algebraic transformations such as call-pattern specialization can make some pretty aggressive performance improvements. We can also make the runtime more efficient: by introducing parallelism, or again, exploiting type and effect information via pointer tagging. All this is easier in a strongly, statically typed and pure setting — there’s just less stuff you have to worry about when doing the transformations.

This post introduces an optimization technique based on types: self-optimizing polymorphic data types. When instantiated to a particular type, the code presented will generate both a custom, specialized data structure, and specialized functions over that type. The result is a general mechanism to improve the data density, and thus performance, of parametrically polymorphic data structures (where the same code operates generically on any type), such as lists, trees and finite maps. The general concept should be useful in any language with parametrically polymorphic types, and its implementation in Haskell, via class-associated data types, is particularly elegant (requiring no compiler modification).

Polymorphism

Parametric polymorphism is a great programmer's tool. It improves expressivity, by letting the programmer use the same code at any possible type, yet still retains type safety. For example, the list type is a polymorphic type, and the length function computes the length of any list, no matter what the element type is.

-- The list type: either empty, or cons, holds any type 'a'

data [] a = [] | a : [a]

Prelude> :t length

length :: [a] -> Int

The type system ensures that we can never write length to depend on a property of the element type, ensuring the code is maximally generic. It is simply not possible to mess with the elements of the list, given the type. Any attempt to do so will be a compiler error. This gives us some strong guarantees of abstraction, which we will take advantage of for optimization purposes.

Such polymorphic types and functions are flexible, but we pay a cost for this. In order for the code to work on any type ‘a’, we must ensure that all values have a uniform representation. In most languages, this is via an object or closure – some reference to heap allocated data – and Haskell is no exception. All polymorphic components will be stored as pointers to heap allocated thunks.

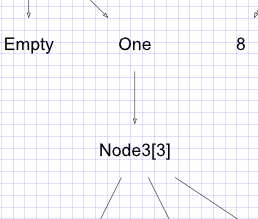

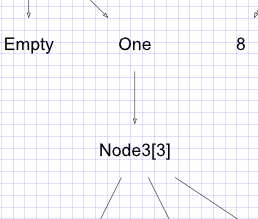

You can see this tradeoff most vividly in this picture of a list of pairs:

The uniform representation means that all values are represented via pointers to values, so the same code (e.g. map, filter) will work on any element type. But look: we have cons nodes with pointers to pair nodes, which in turn point to integer nodes. (Also note the explicit sharing that is visible – the integers 1 and 2 are shared between multiple uses – made possible by purity).

Such a structure is generic, but has poor data density. If the compiler could see that the integer nodes hold word-sized values, it could unpack them into their constructors. GHC can already unpack monomorphic types, removing all indirection and ‘thunkiness’ associated with the data, via a manual unpack pragma at the data declaration site:

data PairIntInt = Pair {-# UNPACK #-} Int {-# UNPACK #-} Int

The Int values will now just be stored directly as word-sized fields in the Pair heap object. GHC can also infer the location of these pragmas if you use strict fields, and enable the -funbox-strict-fields optimization:

data PairIntInt = Pair !Int !Int

However, this technique only works on monomorphic fields. It does not make sense for polymorphic fields, since they have variable size, and the compiler does not (except in very specific circumstances) have access to information about what types are in use.

To unpack, say, all elements of a list of pairs, we need to instead generate a custom type:

data ListPairs = Empty
               | Cons !Int !Int ListPairs

This will happily represent each node with all elements stored locally, but is no longer polymorphic – so any functions have to be written specially for this type. The result however, is a much denser data representation:

Note that unpacking changes the evaluation strategy of the data. Those elements are now strict. The spine of the list is still lazy, however, so it is still just as useful a type for control structures and generators. GHC is remarkably flexible about the control the programmer has over the evaluation strategy (strict, lazy, mixture), so we’ll take advantage of that in this library to produce a spine-lazy, element strict list type that is able to do this unpacking and specialization for us. I encourage you to explore mixed strict and lazy structures as well.
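To make the trade-off concrete, here is a minimal sketch of what working with such a hand-specialized type looks like. The function names (`sumPairs`, `pairsFrom`, `takePairs`) are hypothetical and not part of any library; the point is only that every function must be written against the monomorphic type, and that the lazy spine still supports infinite generators.

```haskell
module Main where

-- Hand-specialized list of Int pairs, as in the text: strict, unpacked
-- elements and a lazy spine (the last field has no strictness annotation).
data ListPairs
  = Empty
  | Cons {-# UNPACK #-} !Int {-# UNPACK #-} !Int ListPairs

-- A function written specially for this type (hypothetical name).
-- It sums both components of every pair with a strict accumulator.
sumPairs :: ListPairs -> Int
sumPairs = go 0
  where
    go acc Empty         = acc
    go acc (Cons a b ps) =
      let acc' = acc + a + b
      in acc' `seq` go acc' ps

-- The spine is lazy, so an infinite generator is still fine.
pairsFrom :: Int -> ListPairs
pairsFrom n = Cons n (n * 2) (pairsFrom (n + 1))

-- Only a finite prefix of the spine is ever demanded here.
takePairs :: Int -> ListPairs -> ListPairs
takePairs _ Empty = Empty
takePairs k (Cons a b rest)
  | k <= 0    = Empty
  | otherwise = Cons a b (takePairs (k - 1) rest)
```

Loaded into GHCi, `sumPairs (takePairs 3 (pairsFrom 1))` evaluates to 18, even though `pairsFrom 1` describes an infinite list. None of the standard list functions apply to `ListPairs`, which is the code reuse problem the rest of this post addresses.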

(An aside: these graphs were generated directly from the live Haskell heap via vacuum – another great new tool for Haskell development. I’ve not written about this yet, but you can see a video of it in use here. You can trace any Haskell heap structure to a graph representation, saving it as svg or png, or inspecting it live from the REPL).

So, how do we teach GHC to do this transformation automatically, and how do we do that without sacrificing code reuse? I only want to write the list library once!

Class-associated data types

Our problem can be stated as:

- when we use a data structure at a particular element type, we require both custom functions, and a custom data type, to be generated

This gives us an insight into how to produce a solution. Type classes in Haskell are precisely the mechanism for having per-type functions. And a recent major extension to type classes has been the addition of class-associated data types (in their most general form, as type families).

These two features together will allow us to specialize the container type at each element type, and generate specialized functions for each use, removing “the uniform-representation tax” and improving performance across the board.

Implementation

Where previously the data type for lists was defined as:

data [] a = [] | a : [a]

we will instead associate the data type with a class, and leave the implementation for later. We also have to lift the introduction and elimination forms for the type into the class (as we have no explicit constructors or pattern matching anymore):

class AdaptList a where
    data List a

    -- | The empty list
    empty :: List a

    -- | Prepend a value onto a list
    cons :: a -> List a -> List a

    -- | Is the list empty?
    null :: List a -> Bool

    -- | The first element of the list
    head :: List a -> a

    -- | The tail of the list
    tail :: List a -> List a

Now we just need to write some functions over this abstract list type. First, conversion, to and from “real” lists:

-- | /O(n)/, convert an adaptive list to a regular list
toList :: AdaptList a => List a -> [a]
toList xs
    | null xs   = []
    | otherwise = head xs : toList (tail xs)

-- | /O(n)/, convert a regular list to an adaptive list
fromList :: AdaptList a => [a] -> List a
fromList []     = empty
fromList (x:xs) = x `cons` fromList xs

Note how we have to replace explicit pattern matching with calls to the elimination forms (null, head, tail), and constructors are replaced with calls into the type class methods (cons, empty). We will see that GHC is able to inline away all the type class dictionaries, resulting in specialized code when used at any specific type.

Some general functions on lists: map, fold, filter:

-- | /O(n)/. 'map' @f xs@ is the list obtained by applying @f@ to each element
-- of @xs@
--
map :: (AdaptList a, AdaptList b) => (a -> b) -> List a -> List b
map f as = go as
  where
    go xs
      | null xs   = empty
      | otherwise = f (head xs) `cons` go (tail xs)
{-# INLINE map #-}

-- | /O(n)/. 'foldl', applied to a binary operator, a starting value (typically
-- the left-identity of the operator), and a list, reduces the list
-- using the binary operator, from left to right
--
foldl :: AdaptList b => (a -> b -> a) -> a -> List b -> a
foldl f z0 xs0 = go z0 xs0
  where
    go !z xs
      | null xs   = z
      | otherwise = go (f z (head xs)) (tail xs)
{-# INLINE foldl #-}

-- | /O(n)/. 'filter', applied to a predicate and a list, returns the list of
-- those elements that satisfy the predicate
--
filter :: AdaptList a => (a -> Bool) -> List a -> List a
filter p xs0
  | null xs0  = empty
  | otherwise = go xs0
  where
    go xs
      | null xs   = empty
      | p x       = x `cons` go ys
      | otherwise = go ys
      where x  = head xs
            ys = tail xs
{-# INLINE filter #-}

These implementations are essentially identical to the Haskell98 Prelude definitions, but with all constructors replaced with type class methods, and pattern matching replaced with “smart deconstructors”. Also, all recursive functions are written in worker/wrapper style, so they may be inlined (crucial to enable the function to be specialized).

So now we have an interface to lists and functions on them, but no concrete list type defined. We will do this via a class-associated type, with one instance for each element type we care about. This is a generic strategy, and so can be automated via some SYB-style generic code generation tricks. The result is a set of instances enumerating all the different specialized data representations statically:

instance AdaptList Int where
    data List Int = EmptyInt | ConsInt {-# UNPACK #-}!Int (List Int)

    empty = EmptyInt
    cons  = ConsInt

    null EmptyInt = True
    null _        = False

    head EmptyInt      = errorEmptyList "head"
    head (ConsInt x _) = x

    tail EmptyInt      = errorEmptyList "tail"
    tail (ConsInt _ x) = x

And so on for all the other types. Now, when we write a function on say, List Int, GHC will replace all our code with the specialized data type, where the Int elements are unpacked. All calls to the smart constructors and deconstructors will be replaced with the specialized versions as well.
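The pieces above can be assembled into one small module to experiment with. This is a sketch rather than the library's actual source: it assumes the `TypeFamilies` and `BangPatterns` extensions, hides the clashing Prelude names, and substitutes plain `error` calls for `errorEmptyList`, which is an internal GHC helper.

```haskell
{-# LANGUAGE TypeFamilies, BangPatterns #-}
module Main where

import Prelude hiding (null, head, tail, map, foldl)

-- The abstract interface: the representation of 'List a' is chosen
-- per element type by each instance.
class AdaptList a where
  data List a
  empty :: List a
  cons  :: a -> List a -> List a
  null  :: List a -> Bool
  head  :: List a -> a
  tail  :: List a -> List a

-- Specialized representation for Int: the element is stored unpacked
-- in the cons cell, while the tail stays lazy.
instance AdaptList Int where
  data List Int = EmptyInt | ConsInt {-# UNPACK #-} !Int (List Int)
  empty = EmptyInt
  cons  = ConsInt
  null EmptyInt = True
  null _        = False
  head EmptyInt      = error "head: empty list"  -- stand-in for errorEmptyList
  head (ConsInt x _) = x
  tail EmptyInt       = error "tail: empty list" -- stand-in for errorEmptyList
  tail (ConsInt _ xs) = xs

fromList :: AdaptList a => [a] -> List a
fromList = foldr cons empty

toList :: AdaptList a => List a -> [a]
toList xs
  | null xs   = []
  | otherwise = head xs : toList (tail xs)

-- Worker/wrapper style: the non-recursive wrapper can be inlined,
-- letting GHC specialize the local worker at the call site.
map :: (AdaptList a, AdaptList b) => (a -> b) -> List a -> List b
map f = go
  where
    go xs
      | null xs   = empty
      | otherwise = f (head xs) `cons` go (tail xs)
{-# INLINE map #-}

foldl :: AdaptList b => (a -> b -> a) -> a -> List b -> a
foldl f = go
  where
    go !z xs
      | null xs   = z
      | otherwise = go (f z (head xs)) (tail xs)
{-# INLINE foldl #-}
```

In GHCi, `toList (map (+ 1) (fromList [1, 2, 3 :: Int]))` gives `[2,3,4]`. Whether the dictionaries are actually inlined away depends on compiling with optimization enabled, so inspecting the generated core is the way to confirm it for a given GHC version.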

We should thus get denser, faster list types and functions.

You can see the full implementation here, as well as the same technique applied to sums and products, and with the full list API filled out. I’m calling these “adaptive” data structures, as they adapt to a different shape based on how you use them.
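As an illustration of how the same idea might carry over to products, here is a small sketch of an adaptive pair. The class and method names (`AdaptPair`, `pair`, `swap`) are assumptions made for this example and may not match the library's actual API; the sketch also assumes the `MultiParamTypeClasses` extension alongside `TypeFamilies`.

```haskell
{-# LANGUAGE TypeFamilies, MultiParamTypeClasses #-}
module Main where

import Prelude hiding (fst, snd)

-- Hypothetical class mirroring AdaptList: the representation of a pair
-- is selected by the types of both components.
class AdaptPair a b where
  data Pair a b
  pair :: a -> b -> Pair a b
  fst  :: Pair a b -> a
  snd  :: Pair a b -> b

-- Specialized representation: both Ints live directly in the
-- constructor, with no pointers to boxed integers.
instance AdaptPair Int Int where
  data Pair Int Int = PairIntInt {-# UNPACK #-} !Int {-# UNPACK #-} !Int
  pair = PairIntInt
  fst (PairIntInt a _) = a
  snd (PairIntInt _ b) = b

-- A generic function written once against the interface; it works for
-- any component types that have instances in both orders.
swap :: (AdaptPair a b, AdaptPair b a) => Pair a b -> Pair b a
swap p = pair (snd p) (fst p)
```

The design mirrors the list case: generic code such as `swap` only ever talks to the class methods, so each instance is free to pick its own layout.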

Let’s benchmark and see if things get any faster. As usual, I will use criterion to do the measurement and graphing. It is the best open source benchmarking library around, and makes benchmarking fun, the way QuickCheck makes testing fun.

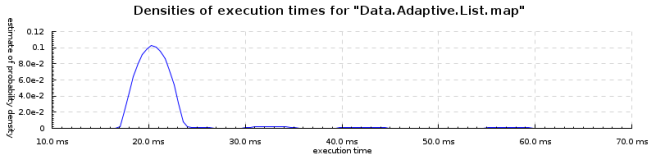

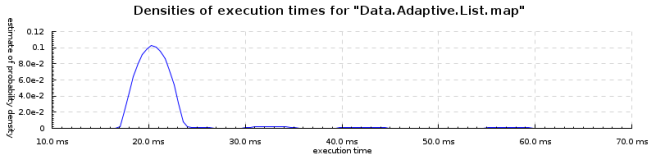

Benchmarks: map

In these benchmarks, I’m measuring the standard GHC 6.10 Data.List functions, and the base list data type, against the version on Hackage. In each case, I generate 100k random integers (using the mersenne-random generator), build a list from those numbers, and measure the time to traverse the structure using each pair of functions. Criterion takes care of running the functions hundreds of times until meaningful data can be extracted. The execution time probability graphs are presented here. Note that they’re on different scales each time.

So, the numbers. Specialized map is 34% faster. Mean goes from 31.9ms to 21.2ms. Garbage collection is reduced by 40% (which seems typical across the board for these functions).

Regular Haskell list type and functions:

Specialized list map:

Looking at the core, we see that when used at an Int type, we get a specialized loop with unboxed parameters generated (no additional un- or re-boxing is required to store values back into the data structure!). This is a transformation GHC can’t do automatically, due to the uniform representation requirement.

Here’s the core for ‘adaptive sum’, taken from ghc-core:

$wgo :: Data.Adaptive.List.List Int -> Int# -> Int#
$wgo xs n = case xs of
    EmptyInt     -> n
    ConsInt y ys -> $wgo ys (+# n y)

Compared to regular lists, we see the additional unboxing of each list element that is required:

$wlgo :: Int# -> [Int] -> Int#
$wlgo n xs = case xs of
    []     -> n
    (y:ys) -> case y of
        I# y# -> $wlgo (+# n y#) ys

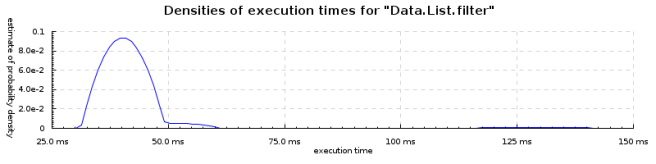

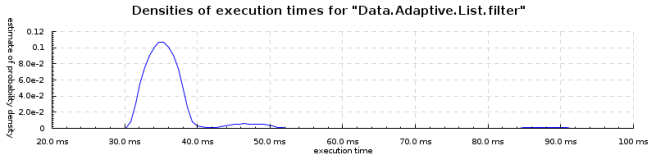

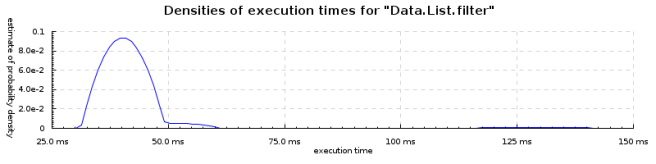

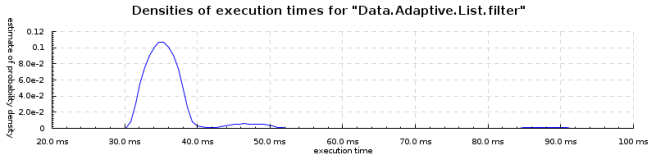

Benchmarks: filter

Filter is 15% faster. Mean goes from 42.5ms to 36.2ms.

Specialized version:

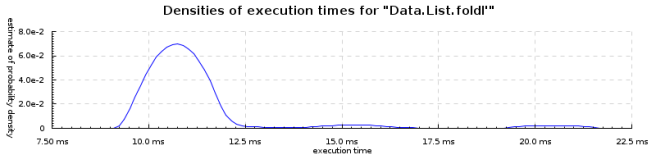

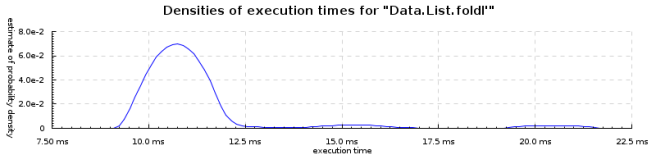

Benchmarks: foldl

Reducing a list is 23% faster (sum, length, maximum, product, minimum). Mean goes from 11.2ms to 8.7ms.

and when specialized:

Notes

One of the obvious questions is: what about fusion? Stream fusion is an entirely different automatic optimization that combines multiple traversals over a data structure into a single, more complicated traversal, dramatically improving time and space usage. The data structures and functions shown in this post complement fusion well: we can still fuse loops on specialized data and functions, removing unnecessary traversals. Stream fusion also enables opportunities for specialization, which will no longer be of much use, as our data types have specialized themselves.

We also lose sharing in this approach. Sorry, but your Int fields will be copied by value. The approach makes most sense for small atomic types anyway, which may be unpacked, and these are precisely the types where sharing is unlikely to be helpful.

Essentially, we now treat the list type and constructors as an abstract view onto some custom, specialized representation. We may be able to regain pattern matching on the abstract structures via GHC’s view patterns. I’ve not tried this yet, but it looks easy enough.
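As a rough idea of how view patterns might restore pattern matching, consider the following untested-in-the-library sketch. It assumes the `ViewPatterns` extension, repeats a cut-down `AdaptList` class so that it stands alone, and introduces a hypothetical helper `uncons` that exposes one layer of the abstract list as an ordinary `Maybe`.

```haskell
{-# LANGUAGE TypeFamilies, ViewPatterns #-}
module Main where

import Prelude hiding (null, head, tail)

class AdaptList a where
  data List a
  empty :: List a
  cons  :: a -> List a -> List a
  null  :: List a -> Bool
  head  :: List a -> a
  tail  :: List a -> List a

instance AdaptList Int where
  data List Int = EmptyInt | ConsInt {-# UNPACK #-} !Int (List Int)
  empty = EmptyInt
  cons  = ConsInt
  null EmptyInt = True
  null _        = False
  head EmptyInt      = error "head: empty list"
  head (ConsInt x _) = x
  tail EmptyInt       = error "tail: empty list"
  tail (ConsInt _ xs) = xs

-- Hypothetical view function: one layer of the abstract list, exposed
-- as a type we can pattern match on.
uncons :: AdaptList a => List a -> Maybe (a, List a)
uncons xs
  | null xs   = Nothing
  | otherwise = Just (head xs, tail xs)

-- With view patterns, the definition reads almost like the usual
-- two-equation function over [] and (:).
sumList :: (AdaptList a, Num a) => List a -> a
sumList (uncons -> Nothing)      = 0
sumList (uncons -> Just (x, xs)) = x + sumList xs
```

Whether GHC removes the intermediate `Maybe` and tuple after inlining is something to check in the core; this sketch only shows that the surface syntax can be recovered.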

The list implementation is a useful proof of concept — you can use it in your list-heavy programs today if you want. The most promising direction, though, in my view, is for container types such as IntMap and Sequence, where already the constructors are abstract, and there’s a small set of primitive operations, and we really care about data density. There may be some big wins for coalescing of nodes in IntMap, for example.

Results

In summary, we have a general approach to allow, for the first time, uniform specialization of both parametrically polymorphic functions and data, resulting in good speedups with only library modifications required. The Haskell type system, via class-associated data types, is sufficiently expressive to describe abstract and concrete representation mappings, and the GHC optimizer (particularly the inliner and the unpacking pragma) is enough to precisely control the layout of data, along with the generation of custom functions for each type. The approach should give speedups in any language with a uniform representation policy, though it is certainly easier when you don’t need to modify the compiler.

The approach here is very specific, and may be subsumed by more general global information optimization techniques, such as supercompilation (coming to GHC). We also rely on the magic of the GHC inliner to remove all traces of type class dictionaries.

The next steps will be to combine this with list fusion, to have the fastest lazy lists I know of, and to try it out on IntMap and friends, to yield faster polymorphic container types.

The result is satisfying: your Haskell code goes faster, while remaining high level and pure.

Further reading

This is the first time I’ve written down this approach, but the idea has been kicking around for a few months. You can see my WG2.8 talk on the subject here, and a video of me talking about initial experiments in this direction at the Utrecht hackathon. I’d be interested if readers know of any related work for this joint specialization of data and function code, to eliminate the indirection cost of parametric polymorphism.
