The Google Summer of Code is a great chance to work on open source projects as a student and get training from some experienced hackers, all wonderfully sponsored by Google. Haskell.org will be participating for its 5th year.

If you’re thinking about working on Haskell projects, you should certainly be reading:

Here are some things to think about before you submit a proposal to Haskell.org this summer, to help you make it stronger. This is purely my opinion, and might not reflect the opinion of all other mentors.

We have limited resources as a community, and the Google Summer of Code has been instrumental in bringing new tools and libraries to our community. Some notable projects from the past few years include:

- The GHCi debugger

- Improvements to Haddock

- Major work on Cabal

- Generalizing Parsec (parsec3)

- Shared Libraries for GHC

- Language.C

These student projects have gone on to have wide impact, or brought new capabilities to the Haskell community. The pattern has been towards work on the most popular tools and libraries, or work on new areas that Haskell programmers are demanding work on. Rarely do we fund development of applications in Haskell, instead concentrating on general infrastructure that supports all Haskell projects.

To succeed with your project proposal, you need to propose doing work on the most important “blockers” for Haskell’s use. Work that:

- Brings the most value to the community

- Addresses widespread need

- Will impact many other libraries and tools

- Doesn’t rewrite things in Haskell for their own sake

- Is feasible in 3 months

It can be hard to find a project of the right size: important enough that the work will make a difference, and thus attract the attention of mentors (who vote on your proposal), but still feasible to achieve in 3 months.

To help get a sense of what the community thinks is “hot” (though not necessarily important), we set up a crowd voting site for ideas on Reddit. But be wary: the crowd can get excited about silly things, and doesn’t necessarily have good business sense.

The list of projects can help you get a sense of what Haskellers are thinking about this year. Not everything here is feasible for a summer project though, so be sure to get advice!

Some of the social factors to consider in your application:

- You have one or two mentors with good expertise in the area. Ideally you’re already lining up the mentors to help watch your work.

- You’re hanging out in #haskell or on the Haskell Reddit, your blog is on Planet Haskell, or you’re going to be at the hackathons.

The more involved you are in the community, the more likely you’ll have a sense of what the most important work to propose is.

And of course you need to have skills to pay the bills:

- You should have demonstrated competence in Haskell (e.g. you’ve uploaded things to Hackage)

- Demonstrated discipline in open source: self-motivation

As a guide to what reviewers will be thinking about when reading your proposal, you should be able to answer (at least) the following questions from the proposal alone:

- What are you trying to accomplish?

- How is it done in Haskell now, and with what limitations?

- Are there similar projects in other languages? What related work is there?

- If successful, what difference will it make? Will it enable new Haskell business cases? Performance improvements to many libraries? Better accessibility of Hackage code?

- What are the mid-term and final results you’re hoping to achieve?

- How will the result of your work affect other projects?

So, now that you know what we’re interested in, start writing that proposal!

lookup

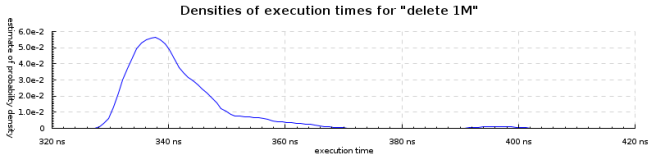

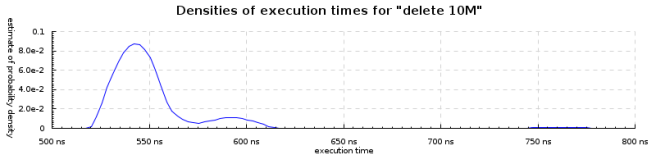

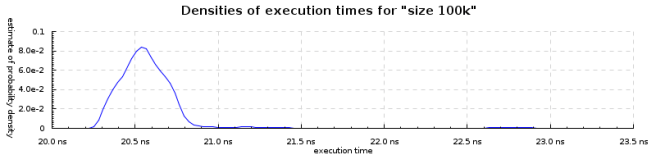

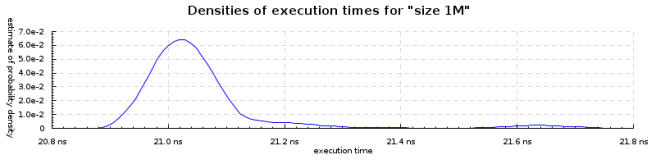

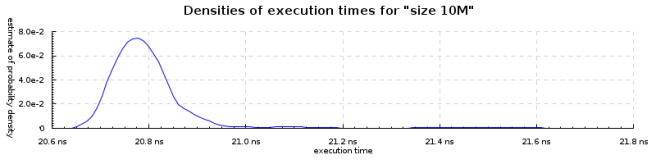

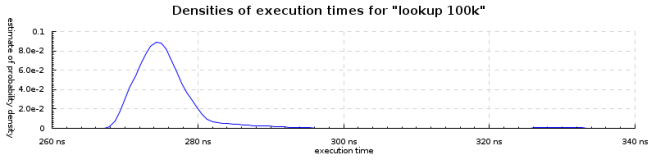

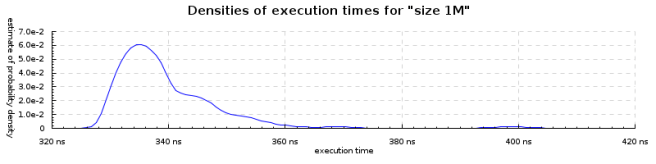

If we plot the mean lookup times against N, we’ll see the lookup time grows slowly (taking twice as long when the data is 100 times bigger). (N.B. I forgot to change the titles on the above graphs).
