![]()

Today at HacPDX I did more work on the Judy bindings described yesterday. Here is judy 0.2.1 up (lunchtime release). These are bindings to the (in)famous judy arrays library, allowing Haskell keys and values to be stored in fast, mutable maps and hash structures. You can currently index via words (i.e. you have to hash keys yourself), and you can store value types or pointers to Haskell values allocated with stable pointers.

Today, I started by filling out the API some more, and benchmarking of how each API function scales. The functions I’m interested in are:

size :: JudyL a -> IO Int insert :: JA a => Key -> a -> JudyL a -> IO () lookup :: JA a => Key -> JudyL a -> IO (Maybe a) delete :: Key -> JudyL a -> IO ()

Let’s see how the performance of these functions scales with input. Benchmarking is performed via criterion (newly released to the public!) with random input keys and values generated from the mersenne twister.

Implementation methodology

My general strategy with constructing a high performance Haskell library is to:

- Pick a candidate benchmark set.

- Pick a good candidate data structure to make the benchmark faster.

- Carefully implement the type, or the binding to the foreign type and its representation in Haskell

- Carefully abstract out the unsafeties in any low level interface via type or language features.

- Function-by-function, check by eye the optimized code that GHC produces (with ghc-core) (in particular, inlining, specialization and unboxing).

- Function-by-function, benchmark the absolute performance as input grows (with criterion), with reference to model structures.

- Use QuickCheck to show correctness against a model, with HPC to confirm code coverage of the test.

- Document extensively with haddock.

- Collect ideas on how to generalize the interface (via type classes, more flexible types, representation-changing interfaces via type families).

size

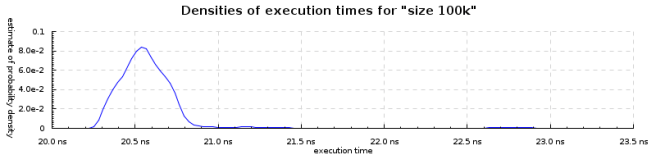

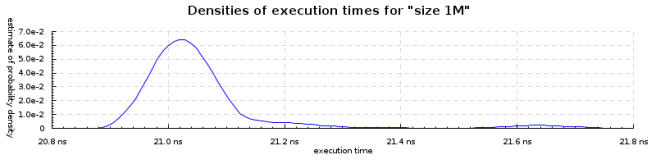

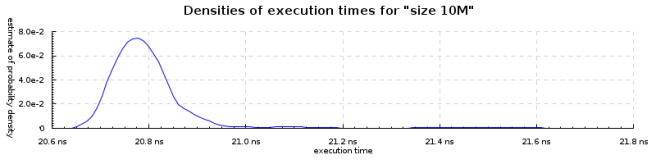

The size function tells you how many keys are in the Judy array. It has O(1) complexity, as we see from empirical measurement, here with randomized arrays with Int values and keys. We get the same cost whether it is 100k, 1M or 10 million elements in the structure. size is fast and scales.

lookup

lookup

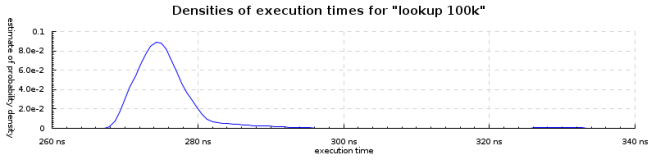

A common operation on associative arrays is lookup(). It better be fast, and not degrade.

If we plot the mean lookup times against N, we’ll see the lookup time grows slowly (taking twice as long when the data is 100 times bigger). (N.B. I forgot to change the titles on the above graphs).

If we plot the mean lookup times against N, we’ll see the lookup time grows slowly (taking twice as long when the data is 100 times bigger). (N.B. I forgot to change the titles on the above graphs).

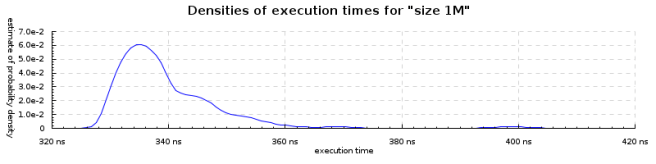

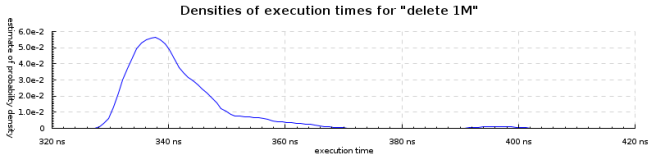

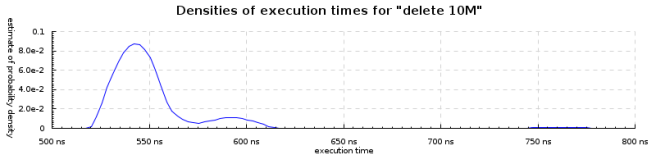

delete

Delete has an almost identical profile to insert, unsurprisingly. Time to delete an element grows very slowly with N, and in absolute terms if very fast.

If the collections are mutable, why bother with Haskell instead of the already popular languages that are full of mutable data structures?

Why not try for “fast immutable collections – see, Haskell really is better” ?

Honestly, this is about as sensble as “you can do OOP in C, you just have to use a lot of macros to do what a C++ compiler would do for you”. And that’s pretty retarded.

Bob. I think you’re mischaracterizing the goals of Haskell, one of which is simply to partition pure and impure code — not to disallow impure code.

We just want to keep mutable and pure (that is, code that is parallel race, deadlock and exception-safe by default) separate.

I think it is a somewhat common misconception that the intention of Haskell is to eradicate side effects and mutable structures. That is not the case. Effects are fact of (computing) life — we have to deal with them.

However, it is important to control effects. The special feature of Haskell is support for *statically* controlling effects.

@Bob: Chris Okasaki’s got that one pretty well covered with “Purely Functional Data Structures”, but sometimes, you get cases where a mutable data structure is exactly what you need. As Don and Manuel pointed out, using those structures in a safe way is what makes Haskell the world’s finest imperative programming language ™.

Is it possible to implement the equivalent of the Judy array library in Haskell and match the performance of C code?

Here is a somewhat critical about about judy: http://nothings.org/computer/judy/

@solrize POSIWID :-)

Every last time I hear about the wonders of Haskell, I hear one thing – immutable data is wonderful!

As an associated detail, I often also hear things like “the compiler does wonderful optimisations that make immutable data as fast, if not faster, than mutable data.”

If I mention something like “actually, Haskell has lots and lots of mutable state, it just gets isolated into its own sections” then I get told that I’m wrong, and that actually immutable data is perfect and fast.

If it’s a “common misconception” then its one that Haskell fans seem quite fond of.

Nice work. Have you tested your implementation with less random data? I’d expect most hash maps to perform ok with well distributed keys, but they can fall apart when given some structure.

Sam, certainly, most hash implementations can be defeated with hostile key selection:

http://www.cs.rice.edu/~scrosby/hash/

However, hashing is great for the wide class of applications that only deal with friendly data.

Don, any chance of supporting Judy’s string keys (JudyS and JudyHS types)? Those handle collision resolution and so forth, helping common use cases that aren’t so easy with JudyL by itself.

I second solrize’s inquiry about an implementation supporting String (Data.ByteString, Data.Text) keys.

Would it be difficult to do? I’d happily implement it myself, if I had any idea how to, not having coded against the FFI. Might someone more clueful than I be willing to mentor me in creating such a wrapper?

(Until them, I’m afraid I’ve resorted to OCaml for those utilities that require a fast hashmap-like store.)